Improving 10G Performance

When you first make the jump from 1G Ethernet to 10G, you will be both impressed with the speed jump and a bit disappointed that you can’t actually send a full DVD (8GB) in a second.

Bottlenecks

Getting better 10G speeds means resolving several input/output bottlenecks on your network, starting with whether your disk drives can read data fast enough to fill the network card’s write buffer.

IO Disk Speed

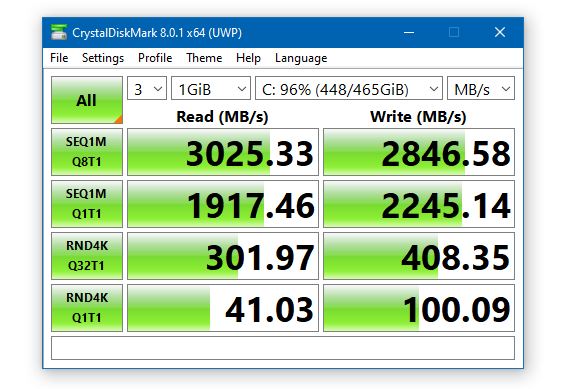

With 10G connections, you might run into a hard limit: a spinning drive on a 6G SATA port cannot possibly fill a 10G connection, no matter what settings you give your NIC. The best way to see if this is your bottleneck is to run benchmarks.

CrystalDiskMark (Windows Only) is a nice tool to see read/write speeds

BlackMagic Disk Speed Test (Mac; the Windows/Linux version is a part of their Desktop Video download)

hdparm (Linux CLI)

    sudo hdparm -t /dev/nvme0n1

    /dev/nvme0n1:
     Timing buffered disk reads: 5584 MB in 3.00 seconds = 1860.73 MB/sec

    /dev/sdd:
     Timing buffered disk reads: 222 MB in 3.08 seconds = 72.01 MB/sec

In the command above, you will have to swap out /dev/nvme0n1 for your actual drive. Run lsblk if you don’t know its name.
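If you benchmark several drives, it can help to pull the numbers out of the hdparm output and compare them to the link speed directly. The following is a minimal sketch, not part of hdparm itself: the function names are made up for illustration, and it assumes 1 MB = 1,000,000 bytes, the same rounding the rest of this article uses.

```python
import re

# Matches the figure at the end of hdparm's summary line,
# e.g. "5584 MB in 3.00 seconds = 1860.73 MB/sec"
HDPARM_SPEED = re.compile(r"=\s*([\d.]+)\s*MB/sec")


def hdparm_mb_per_sec(output: str) -> list[float]:
    """Return every MB/sec figure found in `hdparm -t` output."""
    return [float(value) for value in HDPARM_SPEED.findall(output)]


def mb_per_sec_to_gbps(mb_per_sec: float) -> float:
    """Convert megabytes per second to gigabits per second (8 bits per byte)."""
    return mb_per_sec * 8 / 1000


# The two results shown above, pasted in as sample input.
sample = """
/dev/nvme0n1:
 Timing buffered disk reads: 5584 MB in 3.00 seconds = 1860.73 MB/sec
/dev/sdd:
 Timing buffered disk reads: 222 MB in 3.08 seconds = 72.01 MB/sec
"""

for speed in hdparm_mb_per_sec(sample):
    gbps = mb_per_sec_to_gbps(speed)
    verdict = "can" if gbps >= 10 else "cannot"
    print(f"{speed:8.2f} MB/sec = {gbps:5.2f} Gbps, {verdict} fill a 10G link")
```

With the sample above, the NVMe drive clears the 10 Gbps mark while /dev/sdd falls far short of it.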

If the benchmark shows that your disk is the bottleneck, you can upgrade the drives.

- Spinning Disk ~100MB/s (0.8Gbps)
- SATA SSD ~500MB/s (4Gbps)
- NVMe SSD ~2,000MB/s (16Gbps)
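To put those numbers in terms of the DVD from the introduction, here is a rough sketch of how long an 8GB transfer takes when the slower of the disk and the 10G link sets the pace. It ignores protocol overhead and assumes the approximate speeds from the list above, so treat the results as best-case estimates.

```python
LINK_MB_PER_SEC = 10_000 / 8  # 10 Gbps expressed in MB/s (1250)

# Approximate sequential speeds from the list above, in MB/s.
DRIVES = {"Spinning Disk": 100, "SATA SSD": 500, "NVMe SSD": 2000}


def transfer_seconds(size_mb: float, disk_mb_per_sec: float,
                     link_mb_per_sec: float = LINK_MB_PER_SEC) -> float:
    """Time to move size_mb when the slower of disk and link is the limit."""
    return size_mb / min(disk_mb_per_sec, link_mb_per_sec)


for name, speed in DRIVES.items():
    print(f"{name:14s} {transfer_seconds(8000, speed):5.1f} s for an 8GB DVD")
```

Only the NVMe drive is faster than the link, so it is the only case where the network, not the disk, becomes the limit.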

If you use ZFS, its ARC (adaptive replacement cache) uses physical RAM as a cache. If the files are already in memory, your read speeds can be up in the DDR4 range of ~30,000MB/s (240Gbps). But chances are your files won’t be, so your read speed will be that of the backing storage, although writes can still feel fast while they are being buffered in RAM.

Jumbo Frames

Out of the box, most devices do not enable jumbo frames, which means each packet can only carry up to 1500 bytes. Raising this number allows more data to be carried in each packet; jumbo frames are normally set to 9000 bytes. While this 7500-byte increase might not sound like a lot, it is 6x the data per packet, so the same transfer needs far fewer packets and far less per-packet work. That looks more impressive in terms of transfer speeds, where it can be the difference between 100MB/s and 600MB/s, although the real gain depends on your hardware.
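The per-packet savings can be estimated with some simple arithmetic. The sketch below assumes standard Ethernet framing (38 bytes of preamble, header, checksum, and inter-frame gap per frame) and plain IPv4 plus TCP headers with no options (40 bytes); real traffic will vary, so the figures are illustrative rather than measured.

```python
ETHERNET_OVERHEAD = 38  # preamble 8 + header 14 + FCS 4 + inter-frame gap 12 bytes
IP_TCP_HEADERS = 40     # IPv4 header 20 + TCP header 20 bytes, no options


def frames_per_second(mtu: int, link_bps: float = 10e9) -> float:
    """Frames per second needed to keep a link full at a given MTU."""
    return link_bps / 8 / (mtu + ETHERNET_OVERHEAD)


def payload_efficiency(mtu: int) -> float:
    """Fraction of the bytes on the wire that are actual TCP payload."""
    return (mtu - IP_TCP_HEADERS) / (mtu + ETHERNET_OVERHEAD)


for mtu in (1500, 9000):
    print(f"MTU {mtu}: {frames_per_second(mtu):,.0f} frames/s, "
          f"{payload_efficiency(mtu):.1%} of the wire is payload")
```

Under these assumptions a full 10G link needs roughly 813,000 frames per second at MTU 1500 but only about 138,000 at MTU 9000. The wire efficiency only improves by a few percent, so most of the benefit comes from the NIC, driver, and CPU handling far fewer packets.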

This is the change that you will feel the most, and why it isn’t the default on 10G NICs is beyond me.

Unfortunately, this change isn’t a one-click solution: you need to check every device between the two computers.

LINUX

Check the current MTU with nmcli:

    nmcli

    enp1s0: unmanaged
            "Intel 82599"
            ethernet (ixgbe), 80:61:5F:04:7C:44, hw, mtu 1500

Raise it for the current session:

    sudo ifconfig enp1s0 mtu 9000

To make the change persistent, set the MTU in your netplan configuration:

    sudo vi /etc/netplan/50-cloud-init.yaml

    network:
        ethernets:
            eno1:
                dhcp4: true
            enp1s0:
                dhcp4: true
                mtu: "9014"
        version: 2

Then restart, which is the easiest way to make sure the change is applied. Also match whatever MTU the other systems on your network are using.
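After the restart you can confirm the setting without nmcli, because Linux exposes each interface’s MTU as a small text file under /sys/class/net. The following is a minimal sketch with made-up function names; the sysfs_root parameter exists only so the functions can be pointed at a test directory.

```python
from pathlib import Path


def read_mtu(iface: str, sysfs_root: str = "/sys/class/net") -> int:
    """Read the current MTU of a Linux interface from sysfs."""
    return int((Path(sysfs_root) / iface / "mtu").read_text().strip())


def interfaces_below(target_mtu: int,
                     sysfs_root: str = "/sys/class/net") -> dict[str, int]:
    """Map interface name to MTU for every interface below target_mtu."""
    result = {}
    for entry in sorted(Path(sysfs_root).iterdir()):
        mtu_file = entry / "mtu"
        if mtu_file.is_file():
            mtu = int(mtu_file.read_text().strip())
            if mtu < target_mtu:
                result[entry.name] = mtu
    return result


if __name__ == "__main__":
    # Only meaningful on Linux, where /sys/class/net exists.
    if Path("/sys/class/net").is_dir():
        for name, mtu in interfaces_below(9000).items():
            print(f"{name}: mtu {mtu} (below 9000)")
```

On the machine from the example above, read_mtu("enp1s0") should return the value you configured once the change has been applied.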

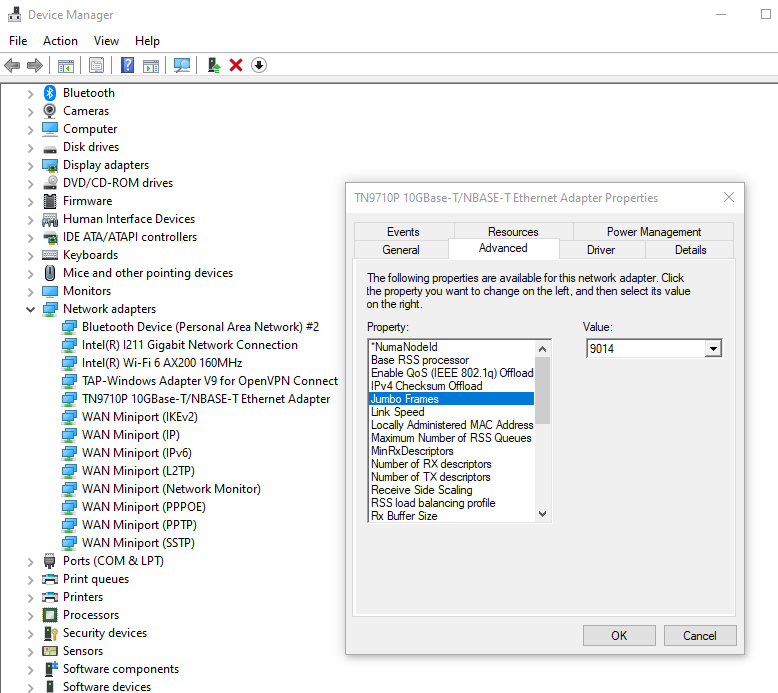

WINDOWS

In Device Manager, find your Network Card and go into its Advanced options. Some cards will have entries for Jumbo Frames while others might just call it MTU.

NETWORK SWITCHES

Most switches will not be a blocker to jumbo frames, since MTU is mostly configured on endpoints and routers, but a switch still has to accept the larger frames, so it’s worth checking whether yours has a jumbo frame option. If you have managed switches, you might need to log into each of them to see if MTU or Jumbo Frame is disabled. If you have unmanaged switches, you can only hope for the best. Once your endpoints are set to use jumbo frames, you can send an oversized ping with the Don’t Fragment flag set to see whether it gets through.

    ping 192.168.1.194 -f -l 8960

    Reply from 192.168.1.194: bytes=8960 time<1ms TTL=64

A payload that is too large fails instead:

    Pinging 192.168.1.194 with 8968 bytes of data:
    Packet needs to be fragmented but DF set.

You can raise or lower the payload size (8960 above) to find your effective MTU. Even though your MTU might be set to 9000, you won’t be able to set the ping payload to 9000, because the IP and ICMP headers (28 bytes in total) push the packet over 9000. The main use of this ping is to check whether a device on your network is limiting you to 1500.
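Raising and lowering the payload by hand is effectively a binary search, which can be sketched as below. The search only needs a probe function that reports whether a given payload size got through. The linux_ping_probe helper is an assumption for illustration: it uses the Linux iputils form of the command (ping -M do -s SIZE), which plays the same role as -f -l on Windows.

```python
import subprocess
from typing import Callable, Optional

ICMP_OVERHEAD = 28  # IPv4 header (20 bytes) + ICMP header (8 bytes)


def max_ping_payload(mtu: int) -> int:
    """Largest ping payload that fits in one unfragmented packet."""
    return mtu - ICMP_OVERHEAD


def largest_passing_payload(probe: Callable[[int], bool],
                            low: int = 1472, high: int = 8972) -> Optional[int]:
    """Binary-search the largest payload in [low, high] that probe() accepts.

    Assumes that if one size passes, every smaller size passes too.
    Returns None if even the smallest size fails.
    """
    if not probe(low):
        return None
    while low < high:
        mid = (low + high + 1) // 2  # round up so the loop always shrinks
        if probe(mid):
            low = mid
        else:
            high = mid - 1
    return low


def linux_ping_probe(host: str) -> Callable[[int], bool]:
    """Build a probe that sends one Don't Fragment ping (Linux iputils flags)."""
    def probe(size: int) -> bool:
        result = subprocess.run(
            ["ping", "-M", "do", "-s", str(size), "-c", "1", "-W", "1", host],
            stdout=subprocess.DEVNULL, stderr=subprocess.DEVNULL)
        return result.returncode == 0
    return probe
```

For example, largest_passing_payload(linux_ping_probe("192.168.1.194")) would return the largest payload that crosses the path, and adding 28 to it gives the effective MTU. The default bounds correspond to MTUs of 1500 and 9000; in practice the result is whatever the smallest MTU along the path allows.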

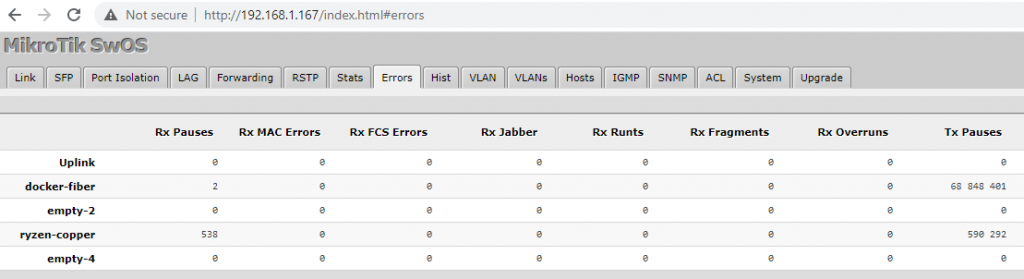

TX Pause

On split-speed networks, the unfortunate reality is that data is sent from high-speed devices, the network has to intermix lower-speed traffic, and sometimes it just cannot keep up. TX Pause is a flow control command pleading with the transmitter to slow down. If packets keep coming, the switch will eventually start dropping them, which might cause your machine to mark the adapter as down. You can see whether this is happening on your network in your switch’s statistics view.

This is difficult to fix. Ideally, you don’t have a split network. You can turn off flow control, but instead of TX Pauses you will just get dropped packets.
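To get a feel for why the switch cannot simply absorb the difference, here is a back-of-the-envelope sketch of how quickly a port buffer fills when a 10G sender feeds a 1G receiver. The 1 MB buffer size is a hypothetical round number, not a figure from any particular switch.

```python
def seconds_until_full(buffer_bytes: int, in_gbps: float, out_gbps: float) -> float:
    """Time for a buffer to fill when data arrives faster than it drains."""
    if in_gbps <= out_gbps:
        return float("inf")  # the buffer drains at least as fast as it fills
    surplus_bytes_per_sec = (in_gbps - out_gbps) * 1e9 / 8
    return buffer_bytes / surplus_bytes_per_sec


BUFFER_BYTES = 1_000_000  # hypothetical 1 MB of port buffer

fill = seconds_until_full(BUFFER_BYTES, in_gbps=10, out_gbps=1)
print(f"Buffer full after about {fill * 1000:.2f} ms")
```

Under these assumptions the buffer is full in under a millisecond, which is why the switch has to either pause the sender or start dropping packets almost immediately.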

Scroll to the bottom and comment if you have any more ideas to speed up your 10G network. This article doesn’t get into RX and TX buffer/descriptor sizes, because tuning those tends to be very payload-specific: it will improve some types of transfers and worsen others.